SEO of Database-Driven Websites

Problems and solutions in optimizing a database-driven website for Google

Optimizing a website for good search rankings poses particular problems when the site's pages are assembled from pieces pulled from a database. If you use PHP, CGI, ColdFusion, Microsoft ASP, or a proprietary shopping cart such as X-Cart, your site probably produces web pages whose URLs (as you see them in your browser's address bar) contain question marks ("?"), equal signs ("=") and other symbols. The links on this kind of website don't point to existing HTML pages. Instead, when you click one, the server builds the page for you "on the fly" from information and HTML code stored in its database.

The trouble is that not all search engine spiders are smart enough to work out how to interact with a database to create those pages, so sometimes they never get past the first page of the site. While indexing your site and following the links from your main page, a spider that finds a question mark in a linked URL, especially one that references a "sessionID", may disregard that link and move on. Session IDs are particularly troublesome because each time a spider visits the site, it is handed a new session ID. It therefore sees the home page at a new, unique URL, indexes it afresh, and ends up with yet another copy of the home page in its index every time it crawls the website.
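To see why session IDs multiply copies of a page, here is a minimal Python sketch. It assumes the session ID travels as a query parameter named `sessionid`, `phpsessid` or `sid`; those names are assumptions, so substitute whatever your platform actually appends. Three crawls of the same home page count as three distinct URLs until the session parameter is stripped out.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed session-ID parameter names (compared case-insensitively).
# PHP's default session name is PHPSESSID; adjust for your platform.
SESSION_PARAMS = {"sessionid", "phpsessid", "sid"}


def strip_session_id(url: str) -> str:
    """Return the URL with any session-ID query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name.lower() not in SESSION_PARAMS
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )


# Three visits to the same home page, each handed a different session ID.
crawled = [
    "https://yourwebsite.com/index.php?sessionid=a81f",
    "https://yourwebsite.com/index.php?sessionid=c29e",
    "https://yourwebsite.com/index.php?sessionid=77d0",
]

print(len(set(crawled)))                            # 3 "different" pages
print(len({strip_session_id(u) for u in crawled}))  # 1 page once IDs are removed
```

A spider cannot safely do this stripping on its own, because it has no way of knowing which parameter is a session ID and which one changes the content. That is why the site itself has to tell it.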

This is another good reason to put a canonical tag (a `<link rel="canonical">` element) in the `<head>` of every web page: it tells Google which URL is the preferred address of the page it has just crawled, no matter which URL it actually arrived at. It is especially important for a database-driven website to include a canonical tag on every page it serves.
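Here is a minimal sketch of generating that tag on the server, written in Python for illustration. It assumes that only the hypothetical `productid`, `productstyle` and `productsize` parameters change what a page displays. Everything else, session IDs included, is dropped, and the kept parameters are written in a fixed order so the same product always gets the same canonical URL.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical allowlist: the only parameters that change the page content,
# listed in the order they should appear in the canonical URL.
CONTENT_PARAMS = ("productid", "productstyle", "productsize")


def canonical_tag(request_url: str) -> str:
    """Build a <link rel="canonical"> element for the page at request_url."""
    parts = urlsplit(request_url)
    params = dict(parse_qsl(parts.query))
    # Keep only content-defining parameters, in a fixed order; drop the rest.
    kept = [(name, params[name]) for name in CONTENT_PARAMS if name in params]
    href = urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
    # Escape the URL for use inside an HTML attribute (& becomes &amp;).
    return f'<link rel="canonical" href="{escape(href, quote=True)}">'


print(canonical_tag(
    "https://yourwebsite.com/product-display.php"
    "?sessionid=9f3c&productsize=42&productid=72"
))
```

For that request, the sketch emits a canonical URL containing only `productid=72` and `productsize=42`, with the session ID gone and the parameters in a fixed order. The `&` between them appears as `&amp;` in the markup, which is correct HTML.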

This is not a new problem! It's been around since web developers first started making websites using databases.

Think of it this way: which of these page addresses gives Google enough information to figure out what the page is about?

- https://yourwebsite.com/product-display.php?productid=72&productstyle=33&productsize=42, or

- https://yourwebsite.com/letter-sized-envelopes/
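One way to get from the first kind of address to the second is to derive a readable "slug" from each product's name. Below is a minimal Python sketch, assuming hypothetical database rows with `productid` and `name` fields. It also builds the reverse lookup the server would need to find the product again when a visitor (or spider) requests the slug.

```python
import re


def slugify(name: str) -> str:
    """Lowercase a name and join its words with hyphens for use in a URL."""
    # Replace every run of characters other than a-z and 0-9 with a single
    # hyphen, then trim hyphens from both ends.
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


# Hypothetical rows as they might come back from a product table.
PRODUCTS = [
    {"productid": 72, "name": "Letter-Sized Envelopes"},
    {"productid": 73, "name": "Legal Pads (Yellow, 50 Sheets)"},
]

# Reverse lookup: slug -> productid.
# If two names produce the same slug, the later row silently wins here.
SLUG_TO_ID = {slugify(row["name"]): row["productid"] for row in PRODUCTS}

for row in PRODUCTS:
    print(f"https://yourwebsite.com/{slugify(row['name'])}/")
# https://yourwebsite.com/letter-sized-envelopes/
# https://yourwebsite.com/legal-pads-yellow-50-sheets/
```

This sketch simply drops accented and other non-ASCII letters. A real site would also need to detect duplicate slugs rather than overwrite them.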

None of the search engines can connect to your database and read what is in it. They can only read the pages your server sends back when they request a URL.

If you have a database-driven site, you need to take special steps to handle the behavior of the bots that crawl it. Here is an outline of some possible solutions:

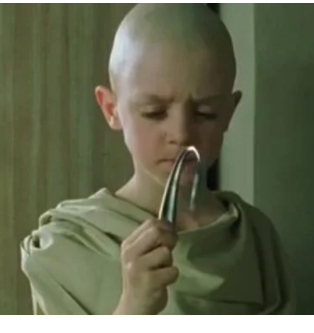

Using ModRewrite to Make Search Engine Friendly URLs

If you can't set up static pages that link into your database, never fear. You can still optimize the site using ModRewrite (Apache's mod_rewrite module). See my ancient blog post on using the .htaccess file to make Search Engine Friendly URLs (also titled "There is no Spoon"). That article gives some examples, and you may be able to implement them easily. Most of the pages on the website you are reading now (including this one!) use ModRewrite to help assemble each page from pieces stored on the server.
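In Apache, ModRewrite does this with RewriteRule directives in the .htaccess file. The sketch below is not Apache configuration. It is a minimal Python illustration of the same idea, assuming a hypothetical rule that maps a one-segment clean path to `product-display.php` with a hypothetical `slug` parameter. The spider only ever sees the clean URL, and the rewrite to the database script happens inside the server.

```python
import re
from typing import Optional

# Hypothetical rule, similar in spirit to a RewriteRule in .htaccess:
# one path segment of lowercase letters, digits and hyphens, with an
# optional trailing slash, is captured as the product slug.
CLEAN_PATH = re.compile(r"/([a-z0-9-]+)/?")


def rewrite(path: str) -> Optional[str]:
    """Map a public, search-friendly path to the internal script URL.

    Returns None when the path does not match, so the request would be
    handled normally (a real file, another rule, or a 404).
    """
    match = CLEAN_PATH.fullmatch(path)
    if match is None:
        return None
    # The slug can only contain [a-z0-9-], so it is safe to place in a query
    # string without escaping. The script would look the slug up itself.
    return f"/product-display.php?slug={match.group(1)}"


print(rewrite("/letter-sized-envelopes/"))  # /product-display.php?slug=letter-sized-envelopes
print(rewrite("/images/logo.png"))          # None
```

Restricting the pattern to a narrow character set keeps the rule from capturing image and script paths, which is also a common precaution when writing real rewrite rules.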

If you are trying to optimize a database-driven site, you can contact us for an inspection and suggestions about what else can and should be done. We've seen some beautifully designed websites (very pretty, interactive, and doing a good job of selling products) that were invisible to Google. You have to HELP Google find and index your content properly.

Making Static Category Pages

You can set things up so that the main (home) page of the site links to several static pages (which will need to be created if they don't already exist), which in turn link to the database-driven pages. It helps to have about a dozen of these static (non-changing) pages: plain old .html pages that are NOT created by the database "on the fly". Typically they describe the main categories of what you are selling. The links FROM these "category" pages can lead to pages built from information pulled from the database, but the category pages themselves should not be regenerated every time someone clicks a link from the main page of the site.

Each of these static category HTML pages should then be optimized for the keyword phrases appropriate to its category. The search engines will pick up and index these static pages, and creating and optimizing a set of them like this gives your website a much better chance of earning good search engine rankings.
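Here is a minimal Python sketch of producing such pages once, ahead of time, rather than on every request. The category data, slugs and wording are hypothetical. Each category's key phrase is placed in the page's `<title>`, `<h1>` and meta description, and each page also carries its own canonical tag. Writing each page as `<slug>/index.html` lets a typical Apache setup serve it at a clean address such as `/letter-sized-envelopes/`.

```python
from html import escape
from pathlib import Path
from typing import Dict, List

# Hypothetical category data: one entry per static category page.
CATEGORIES: List[Dict[str, str]] = [
    {"slug": "letter-sized-envelopes",
     "phrase": "Letter-Sized Envelopes",
     "blurb": "Plain, window and security-tinted letter-sized envelopes."},
    {"slug": "legal-pads",
     "phrase": "Legal Pads",
     "blurb": "Ruled legal pads in yellow and white."},
]

TEMPLATE = """<!DOCTYPE html>
<html lang="en">
<head>
<meta charset="utf-8">
<title>{phrase}</title>
<meta name="description" content="{blurb}">
<link rel="canonical" href="{canonical}">
</head>
<body>
<h1>{phrase}</h1>
<p>{blurb}</p>
</body>
</html>
"""


def render_category_page(cat: Dict[str, str], base_url: str) -> str:
    """Fill the template for one category, HTML-escaping every inserted value."""
    return TEMPLATE.format(
        phrase=escape(cat["phrase"]),
        blurb=escape(cat["blurb"]),
        canonical=escape(f"{base_url}/{cat['slug']}/"),
    )


def write_category_pages(out_dir: Path, base_url: str) -> List[Path]:
    """Write out_dir/<slug>/index.html for every category; return the paths."""
    written = []
    for cat in CATEGORIES:
        page_dir = out_dir / cat["slug"]
        page_dir.mkdir(parents=True, exist_ok=True)
        path = page_dir / "index.html"
        path.write_text(render_category_page(cat, base_url), encoding="utf-8")
        written.append(path)
    return written
```

Calling `write_category_pages(Path("static-site"), "https://yourwebsite.com")` once would produce the files for upload. They only change when someone reruns the script, never when a visitor clicks a link.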